setTimeout and setInterval are basic browser APIs that all web developers are well acquainted with. When trying to implement something like a self-advancing timer, these timer APIs make the job easy.

Let's consider a simple use case. In React, if we are asked to implement a countdown timer that updates the time on the screen every second, we can use the setInterval method to get the job done.

```jsx
import { useEffect, useState } from "react";

const CountDownTimer = () => {
  const [time, setTime] = useState(10);

  useEffect(() => {
    const interval = setInterval(() => {
      setTime(time => {
        if (time > 0) return time - 1;
        clearInterval(interval);
        return time;
      });
    }, 1000); // Run this every 1 second.

    // Stop the timer if the component unmounts before reaching zero.
    return () => clearInterval(interval);
  }, []);

  return <p>Remaining time: {time}</p>;
};
```

This works great if we are only expecting to show a single timer on the page. What if we have to show multiple timers running on the same page?

Multiple timers

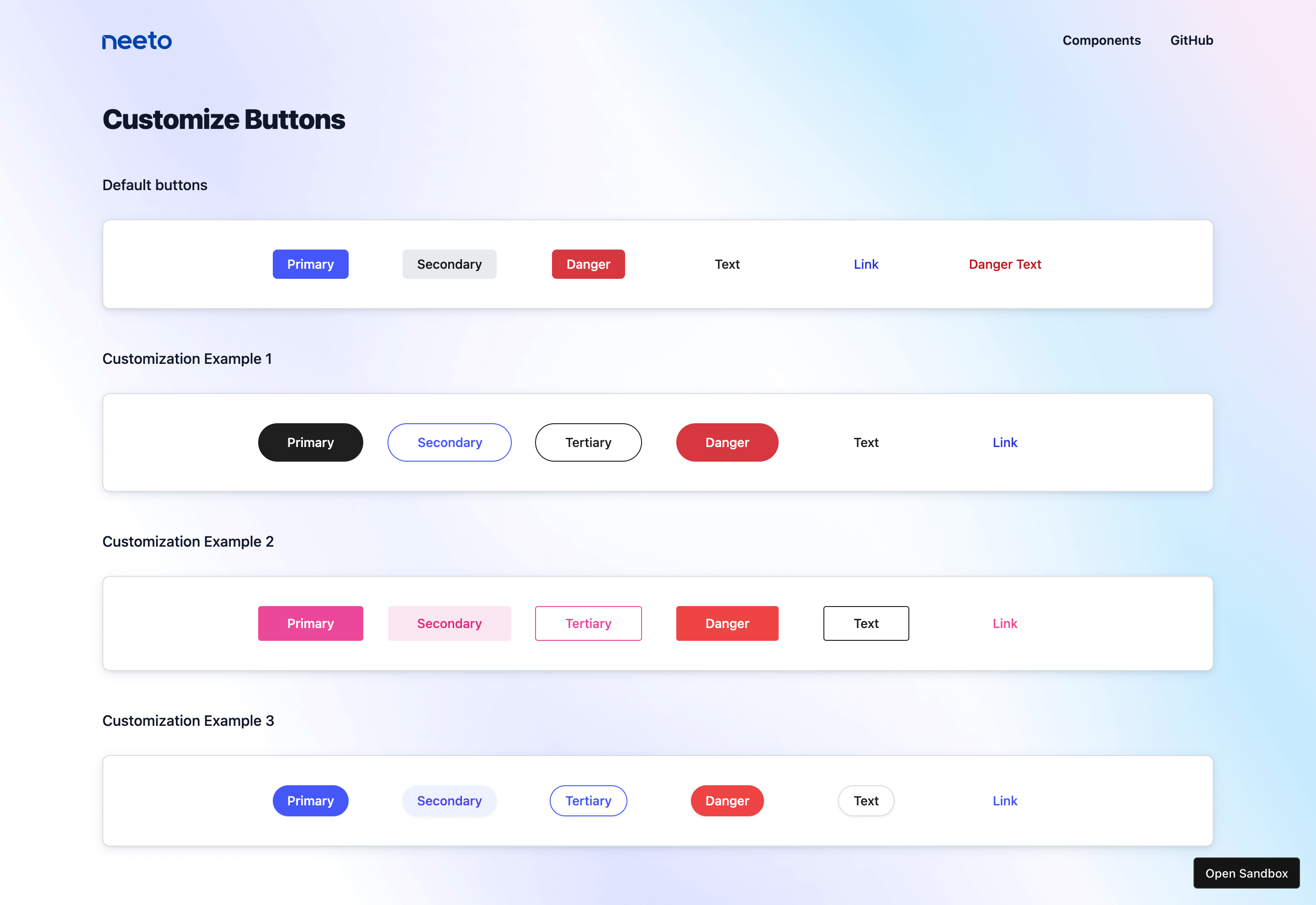

In the conversation page of our neetoChat application, when listing each message in a conversation, we annotate each message with a "time-ago" label. This label indicates the duration since the message was received and is expected to self-advance with passing time.

Normally, our first take on such an implementation would be to use a setInterval timer inside the message component, which triggers the component to rerender every second to update the label. This becomes highly inefficient when we have hundreds of messages to be rendered on the screen at the same time.

The browser ends up running a separate timer for each message to update its label. Also, because timer callbacks run asynchronously, there is a higher chance that they get held up in the JS event loop when the main thread is busy, and then fire late or get skipped altogether.

Using a single timer

An alternate approach could be to keep a single timer and a state on the message listing parent component, update the state every second, and trigger a rerender of the entire list. The obvious downside of this approach is rerendering a large conversation list and all its children every single second. This is wasteful and leads to stutter and other performance issues.

What we wanted was a single timer that updates a single state, triggering a rerender of only the components that need to be updated. In the case of neetoChat conversations, we needed to update the "time-ago" labels alone, not the entire message component or any of its parents.

React's Context API was the most appropriate choice at the time for this task. The Context API offers a simple way of sharing state or values across different components. Whenever the value changes, all components subscribed to it are notified of the change and rerender. To use this approach, we first extracted the timer into a Context provider. Then, all the components that need to be updated with time subscribe through this context. On each tick, the timer notifies the subscribed components and they get rerendered.

```jsx
import React, {
  createContext,
  useEffect,
  useRef,
  useMemo,
  useCallback,
} from "react";

const IntervalContext = createContext({});

const defaultClockDelay = 10 * 1000; // 10 seconds

export const IntervalProvider = ({ children }) => {
  const subscriptions = useRef(new Map()).current;

  useEffect(() => {
    const interval = setInterval(() => {
      const now = Date.now();
      for (const subscription of subscriptions.values()) {
        // Skip this subscription if its delay has not elapsed yet.
        // (`continue`, not `return`, so later subscriptions are still checked.)
        if (now < subscription.time) continue;
        subscription.callback(now);
        // Set next callback time for the subscription.
        subscription.time = now + subscription.delay;
      }
    }, defaultClockDelay);

    return () => {
      clearInterval(interval);
    };
  }, [subscriptions]);

  const subscribe = useCallback(
    (callback, delay = defaultClockDelay) => {
      if (typeof callback !== "function") return undefined;
      const subscription = { callback, delay, time: Date.now() + delay };
      subscriptions.set(subscription, subscription);

      // Unsubscribe callback.
      return () => subscriptions.delete(subscription);
    },
    [subscriptions]
  );

  const contextValue = useMemo(() => ({ subscribe }), [subscribe]);

  return (
    <IntervalContext.Provider value={contextValue}>
      {children}
    </IntervalContext.Provider>
  );
};

export default IntervalContext;
```

The above context exposes a subscribe method that accepts a callback and a delay, and adds them to the list of subscriptions. During each interval, we iterate through the list of subscriptions and invoke those callbacks for which the specified delay has elapsed.
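The dispatch logic can also be looked at in isolation from React. The following is a minimal sketch, not the provider itself: `createRegistry` and `tick` are illustrative names, and the current time is passed in explicitly (an assumption made here so the behaviour can be followed without waiting on a real clock). A subscriber that is not yet due is skipped with `continue`, so subscribers later in the list are still checked on the same tick.

```javascript
// Framework-free sketch of the subscription loop (names are illustrative).
const createRegistry = () => {
  const subscriptions = new Set();

  // Register a callback that should fire at most once per `delay` ms.
  const subscribe = (callback, delay, now) => {
    const subscription = { callback, delay, time: now + delay };
    subscriptions.add(subscription);

    // Unsubscribe callback.
    return () => subscriptions.delete(subscription);
  };

  // Called on every tick of the single shared interval.
  const tick = now => {
    for (const subscription of subscriptions) {
      // Skip only this subscriber; the rest must still be checked.
      if (now < subscription.time) continue;
      subscription.callback(now);
      subscription.time = now + subscription.delay;
    }
  };

  return { subscribe, tick };
};

const registry = createRegistry();
const fired = [];
registry.subscribe(() => fired.push("slow"), 30000, 0);
const stopFast = registry.subscribe(() => fired.push("fast"), 10000, 0);

registry.tick(10000); // only "fast" is due
registry.tick(30000); // both are due
stopFast();
registry.tick(60000); // only "slow" is still subscribed
console.log(fired); // ["fast", "slow", "fast", "slow"]
```

Passing `now` in, rather than reading `Date.now()` inside the loop, is purely a convenience for the sketch.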

To integrate this universal timer into the individual components easily, we have also added a hook that wraps around the common subscription and cleanup logic.

```jsx
import { useContext, useEffect, useState } from "react";

import IntervalContext from "contexts/interval";

const useInterval = delay => {
  const [state, setState] = useState(Date.now());
  const { subscribe } = useContext(IntervalContext);

  useEffect(() => {
    // `subscribe` returns the unsubscribe function, which doubles as cleanup.
    const unsubscribe = subscribe(now => setState(now), delay);

    return unsubscribe;
  }, [delay, subscribe]);

  return state;
};

export default useInterval;
```

Now, the component integration requires only minimal configuration.

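```jsx
import { timeFormat } from "neetocommons/utils";

// Assumed import path; point this at wherever the hook lives.
import useInterval from "hooks/useInterval";

// `time` is assumed to be the message timestamp, passed in as a prop.
const TimeAgo = ({ time }) => {
  useInterval(10000); // Rerender every 10 seconds

  // timeFormat.fromNow() returns the time
  // difference between given time and now.
  return <p>{timeFormat.fromNow(time)}</p>;
};
```

This way, only the "time-ago" label components are updated every 10 seconds, while the parent message components remain unaffected by these updates.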
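For readers who want to try the label without neetocommons, a "time-ago" string can be produced with the built-in Intl.RelativeTimeFormat API. This is a hypothetical stand-in, not the timeFormat.fromNow() implementation: the `fromNow` function, the `UNITS` table, and the optional `now` parameter are all assumptions made for illustration.

```javascript
// Hypothetical stand-in for a "time-ago" formatter; not the neetocommons code.
const formatter = new Intl.RelativeTimeFormat("en", { numeric: "always" });

// Units from largest to smallest, with their size in milliseconds.
const UNITS = [
  ["day", 24 * 60 * 60 * 1000],
  ["hour", 60 * 60 * 1000],
  ["minute", 60 * 1000],
  ["second", 1000],
];

const fromNow = (time, now = Date.now()) => {
  const elapsed = now - time;
  // Pick the largest unit that fits at least once; fall back to seconds.
  const [unit, size] =
    UNITS.find(([, ms]) => Math.abs(elapsed) >= ms) ?? UNITS[UNITS.length - 1];

  // Intl.RelativeTimeFormat treats negative values as "in the past".
  return formatter.format(-Math.trunc(elapsed / size), unit);
};

console.log(fromNow(0, 5 * 60 * 1000)); // "5 minutes ago"
```

Because the output depends only on the two timestamps, the label changes only when the component rerenders, which is exactly what the shared timer triggers.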

Using a global store

As soon as that work was finished, our development guidelines were updated to reflect that we should use zustand for all shared state usages. The above universal timer implementation was refactored to use a zustand store instead of React Context.

```jsx
import { useEffect, useMemo } from "react";

import { isEmpty, omit, prop } from "ramda";
import { v4 as uuid } from "uuid";
import { create } from "zustand";

const useTimerStore = create(() => ({}));

// Interval is created directly inside the module body,
// outside the components and hooks.
setInterval(() => {
  const currentState = useTimerStore.getState();
  const nextState = {};
  const now = Date.now();

  for (const key in currentState) {
    const { lastUpdated, interval } = currentState[key];
    // Check if delay is elapsed.
    const shouldUpdate = now - lastUpdated >= interval;
    if (shouldUpdate) nextState[key] = { lastUpdated: now, interval };
  }

  // Only touch the store when at least one entry is due.
  if (!isEmpty(nextState)) useTimerStore.setState(nextState);
}, 1000);

// `useInterval` was changed to `useTimer`.
const useTimer = (interval = 60) => {
  // Each hook instance gets its own key in the store.
  const key = useMemo(uuid, []);

  useEffect(() => {
    useTimerStore.setState({
      [key]: {
        lastUpdated: Date.now(),
        interval: 1000 * interval, // convert seconds to ms
      },
    });

    // Remove this instance's entry on cleanup (replace, not merge).
    return () =>
      useTimerStore.setState(omit([key], useTimerStore.getState()), true);
  }, [interval, key]);

  return useTimerStore(prop(key));
};

export default useTimer;
```

A zustand store allows access and updates to store values imperatively, outside the render, by calling the getState() and setState() methods.
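The per-tick update in the interval above can be read as a pure function from the current store state and the current time to a patch. The sketch below isolates that step; `computeNextState` is an illustrative name, not part of the hook. Since zustand's setState() merges the given object into the state shallowly by default, passing only the entries that are due leaves the other keys untouched, so components selecting those keys see the same object and do not rerender.

```javascript
// Pure version of the per-tick update: returns only the entries that are due.
const computeNextState = (currentState, now) => {
  const nextState = {};

  for (const [key, { lastUpdated, interval }] of Object.entries(currentState)) {
    // An entry is due once its interval (in ms) has elapsed.
    if (now - lastUpdated >= interval) {
      nextState[key] = { lastUpdated: now, interval };
    }
  }

  return nextState;
};

const state = {
  a: { lastUpdated: 0, interval: 10000 },
  b: { lastUpdated: 0, interval: 60000 },
};

const patch = computeNextState(state, 10000);
console.log(Object.keys(patch)); // ["a"], since only "a" is due after 10 seconds
```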

An improved version

In the latest iteration of the useTimer hook, we decided to drop the external dependency on zustand and instead migrate the implementation to React's new useSyncExternalStore hook. The useSyncExternalStore hook basically allows you to derive a React state from external change events.

```jsx
import { useRef, useSyncExternalStore } from "react";

import { isNotEmpty } from "neetocist";

const subscriptions = [];
let interval = null;

const initiateInterval = () => {
  // Create a new interval only if there are no existing subscriptions.
  if (isNotEmpty(subscriptions)) return;
  interval = setInterval(() => {
    subscriptions.forEach(callback => callback());
  }, 1000);
};

const cleanupInterval = () => {
  // Clean up the existing interval only if there are no more subscriptions.
  if (isNotEmpty(subscriptions)) return;
  clearInterval(interval);
};

const subscribe = callback => {
  initiateInterval();
  subscriptions.push(callback);

  // Runs on unmount. Remove the subscription from the list.
  return () => {
    subscriptions.splice(subscriptions.indexOf(callback), 1);
    cleanupInterval();
  };
};

const useTimer = (delay = 60) => {
  const lastUpdatedRef = useRef(Date.now());

  return useSyncExternalStore(subscribe, () => {
    const now = Date.now();
    let lastUpdated = lastUpdatedRef.current;
    // Calculate the time difference to derive the new state.
    // If the specified delay has elapsed, return a new value for the state.
    // If not, return the last value (no state change).
    if (now - lastUpdated >= delay * 1000) lastUpdated = now;
    lastUpdatedRef.current = lastUpdated;

    return lastUpdated;
  });
};
```

In summary, when the useTimer hook is invoked with a delay, a callback is added to the list of subscriptions, and the component is updated once the specified delay has elapsed. On unmount, the subscription is removed from the list. In contrast to previous versions, the new version is much cleaner and has the added benefit of running the interval timer only when required. The timer is created only when the first subscription is added and cleared when all subscriptions have been removed.
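The "run the timer only when required" behaviour can be checked without React or real timers. The sketch below is a simplified variant, not the hook itself: `createTicker` is an illustrative name, the timer functions are injected as parameters (an assumption made so that fake ones can be swapped in), and a Set is used in place of the array.

```javascript
// Sketch: a shared ticker that runs only while it has subscribers.
// `startTimer` and `stopTimer` are injected so the start/stop behaviour
// can be observed without waiting on real timers.
const createTicker = (startTimer = setInterval, stopTimer = clearInterval) => {
  const subscriptions = new Set();
  let handle = null;

  const notify = () => subscriptions.forEach(callback => callback());

  const subscribe = callback => {
    // The first subscriber starts the interval.
    if (subscriptions.size === 0) handle = startTimer(notify, 1000);
    subscriptions.add(callback);

    return () => {
      // Ignore repeated unsubscribe calls for the same callback.
      if (!subscriptions.delete(callback)) return;
      // The last subscriber to leave stops the interval.
      if (subscriptions.size === 0) {
        stopTimer(handle);
        handle = null;
      }
    };
  };

  return { subscribe };
};

// Fake timer functions that only record what happened.
const log = [];
const ticker = createTicker(
  () => {
    log.push("start");
    return 1;
  },
  () => log.push("stop")
);

const offA = ticker.subscribe(() => {});
const offB = ticker.subscribe(() => {});
offA(); // one subscriber left, timer keeps running
offB(); // no subscribers left, timer is stopped
console.log(log); // ["start", "stop"]
```

With two subscribers the timer is started once and stopped once, which is the property the summary above describes.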